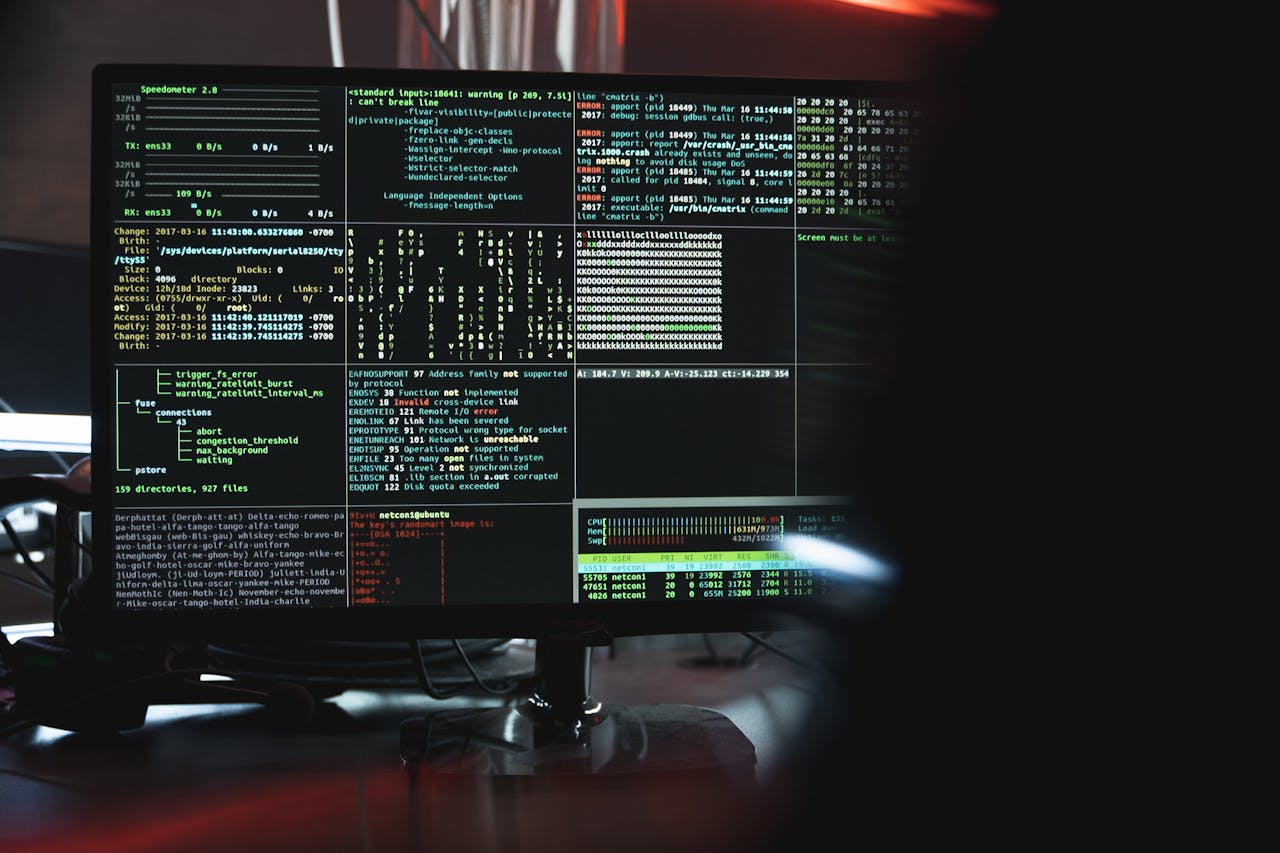

AI Pentesting Agents: Can Autonomous Red Teams Replace Human Hackers?

Stanford’s ARTEMIS agent outperformed 9 of 10 human pentesters on a live university network at a cost of $18/hour. Horizon3.ai has run 225,000 autonomous pentests. RunSybil just raised $40M. But the best human tester still scored 17% higher than the best AI, and agents miss GUI-based vulnerabilities almost entirely. This post breaks down the benchmarks, the tools, and where the line between AI and human offensive security actually sits in 2026.